LAUGHING WEB DOT SPACE

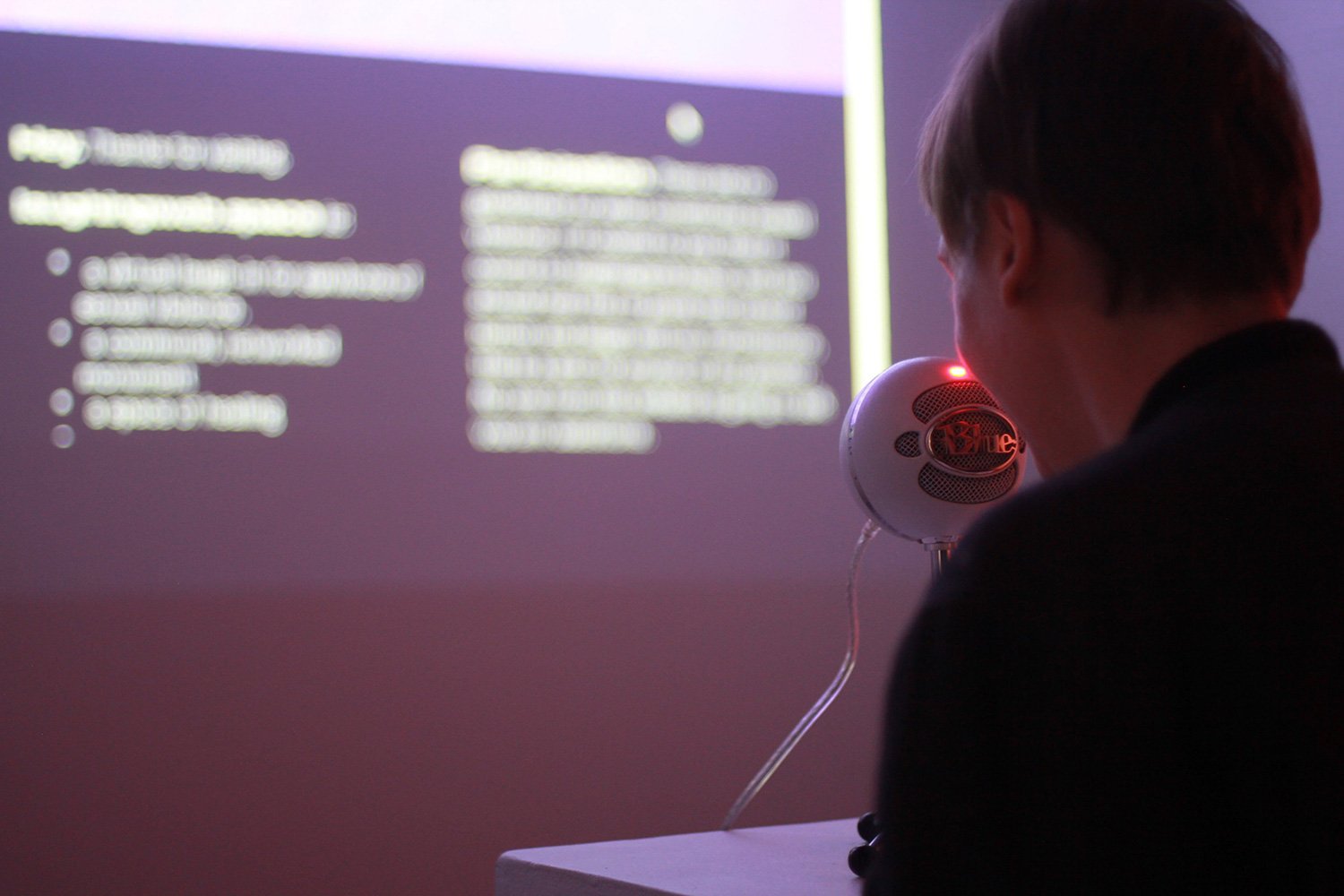

Installation detail of laughingweb.space (2018) by Erin Gee.

Exhibition at Eastern Bloc, Montreal. Photo by Anna Iarovaia.

2018

An interactive website and virtual laugh-in for survivors of sexual violence.

The URL: https://laughingweb.space

This website enables survivors to record and listen to the sounds of their laughter, and through the magic of the internet, laugh together. Visitors of any gender who self-identify as survivors are invited to use the website’s interface to record their laughter and join in: no questions asked. Visitors can also listen to previously recorded laughter on loop.

Why laughter? Laughter is infectious, and born of the air we still breathe. We laugh in joy. We laugh in bitterness. We laugh awkwardly. We laugh in relief. We laugh in anxiety. We laugh because it is helpful for us. We laugh because it might help someone else. Laughing is good for our health: soothing stress, strengthening the immune system, and easing pain. Through laughter, we proclaim ourselves as more complex than the traumatic memories that we live with. Our voices echo, and will reverberate in the homes, public places, and headphones of whoever visits.

Dedicated to Cheryl L’hirondelle

This project was commissioned by Eastern Bloc (Montreal) on the occasion of their 10th anniversary exhibition. For this exhibition, Eastern Bloc invited the exhibiting media artists to present work while thinking of linkages to Canadian media artists that inspired them when they were young. I’m extremely honored and grateful for the conversations that Cheryl L’hirondelle shared with me while I was developing this project.

When I was just beginning to dabble in media art in art school, the net-based artworks of Cheryl L’hirondelle demonstrated to me the power of combining art with sound and songwriting, community building, and other gestures of solidarity on the internet. Exposure to her work was meaningful to me – I was looking for examples of other women using their voices with technology. Skawennati is another great artist who was creating participative web works in the late 90s and early 2000s – you can check out her cyberpowwow here.

Credits

Graphic Design – Laura Lalonde

Backend Programming – Sofian Audry, Conan Lai, Ismail Negm

Frontend Programming – Koumbit

Special thank you to Kai-Cheng Thom, who with wisdom, grace, and passion guided me through many stages of this work’s development.

Exhibition History

October 3-23, 2018 – Eastern Bloc, Montreal. Curated by Eliane Ellbogen.

February 16, 2019 – The Feminist Art Project @ CAA Conference – Trianon Ballroom, Hilton NYC.

February 2019 – Her Environment @ Yards Gallery, Chicago. Curated by Chelsea Welch and Iryne Roh.

June 26 to August 11, 2019 – SESI Arte Galeria, FILE festival, São Paulo, Brazil.

October 4-5, 2019 – Video presentation and exhibition at Sound::Gender::Feminism::Activism symposium, Tokyo.

Links

Press

Fields, Noa/h. (2019). “Dangling Wires: Artists Examine Relationship with Technology in Entanglements.” Scapi Magazine (Chicago). https://scapimag.com/2019/02/05/dangling-wires-artists-examine-relationship-with-technology-in-entanglements/

Fournier, Lauren (2018). “Our Collective Nervous System.” Canadian Art. https://canadianart.ca/interviews/our-collective-nervous-system/

Berson, Amber (2018). “Amplification.” Canadian Art. REVIEWS /