Audio Placebo Plaza

Poster for Audio Placebo Plaza: Montreal Edition (2021)

2021 / 2022

In June 2021 the trio transformed a former perfume shop in the St Hubert Plaza of Montreal into a pop-up radio station, sensory room, therapist's office, and audio production studio, uniting these spaces through the aesthetics of a sandwich shop or cafe to offer customizable audio placebo “specials” and “combos” to the public.

Founded upon principles of feminism, socialism, and audio production excellence, Audio Placebo Plaza invites everyday people to take appointments with artists to discuss how an audio placebo could help improve their lives. These appointments are entirely focused on the individual and are in themselves part of the process. Common topics of discussion included increasing productivity, self-esteem, self-care, social interactivity, brain hacking, mitigating insomnia, and pain management, but also one’s aural preferences, sensitivities, and curiosities. Intake sessions were conducted in a blended telematic/in-person structure to determine one’s familiarity and comfort levels with a variety of psychosomatic audio techniques including but not limited to soundscapes, binaural beats, simulated social interactions, positive affirmations, drone, participatory vocalization, ASMR, guided meditation and deep listening.

After each consultation was complete, team members met to discuss the participant’s case in order to fulfill their “prescription,” and also to divide the labor amongst the three creators. The collaborations are non-hierarchical, adaptive, and simultaneous: one might be working on up to four projects at a time, or trade tasks depending on one’s backlog of labor. Labor is divided into recording sounds, conducting intake sessions, writing scripts, performing spoken or sung content, writing music, editing and audio mixing, cleaning and maintaining the shared spaces, and communicating with visitors or walk-ins.

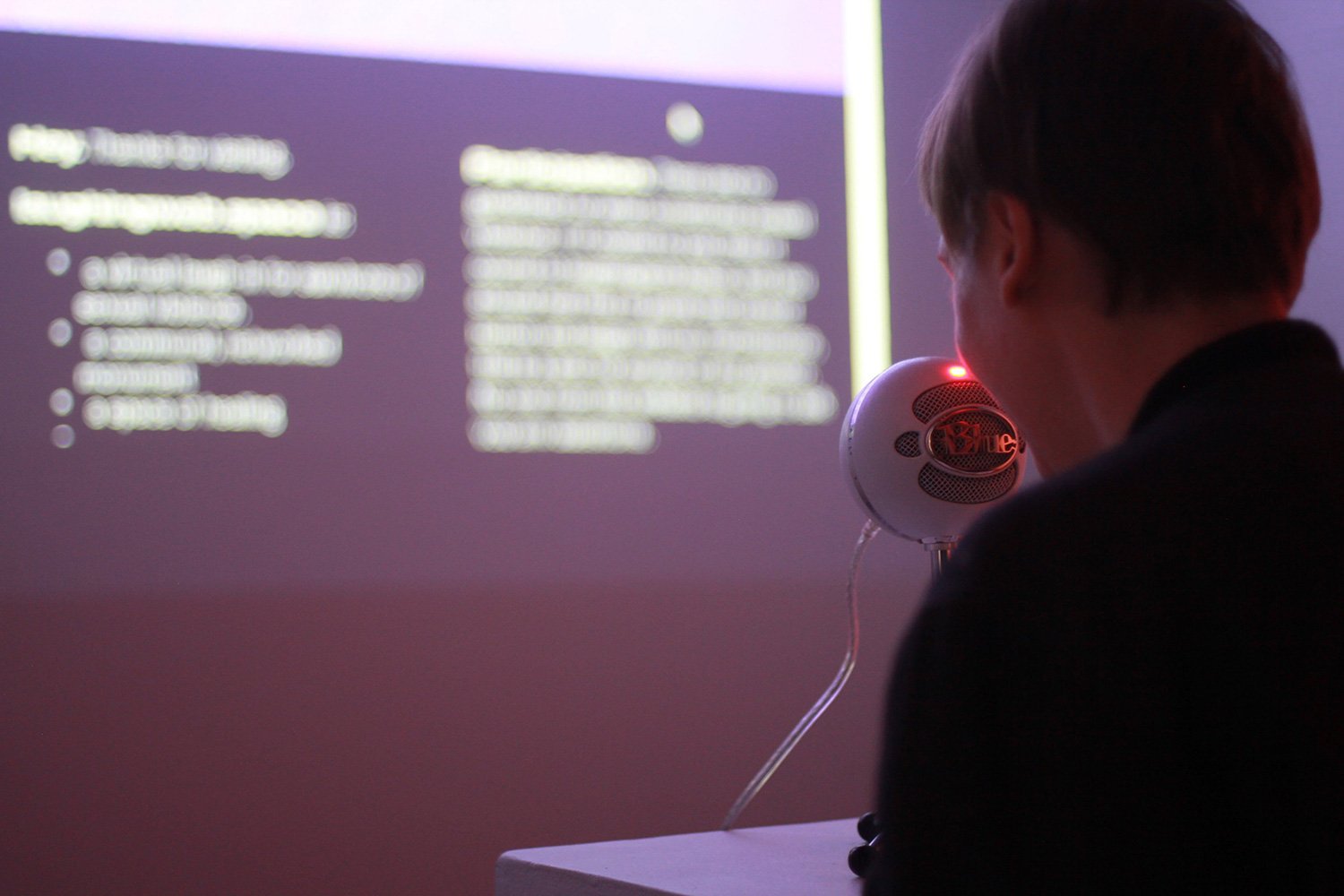

Audio Placebo Plaza Radio was broadcast through a pirate radio transmitter as well as an internet radio station. We broadcast completed placebos, technical advice and performance practices shared during informal critiques, work sessions in progress through the DAW, and sometimes informal chats with visitors. Intake sessions were also broadcast (with the consent of visitors).

Through Audio Placebo Plaza, we explore and develop methods for sound and music that propose emotional labor, listening, collaboration and “music as repair” (see Suzanne Cusick, 2008) as key elements that shape the sonic-social encounter between artists and the public.

Can placebos help?

Does sound have the power to process complex emotions?

Can music give you what you need?

Is this even music?

The Montreal edition of Audio Placebo Plaza has been archived by Andy Stuhl at Wave Farm (NY, USA)

https://wavefarm.org/radio/archive/works/kdtgwn

We created a commemorative booklet for Audio Placebo Plaza: Montreal edition with writing by Budhaditya Chattopadhyay

https://audioplaceboplaza.com/Publication

Credits

Audio Placebo Plaza is a community sound art project conceived by Julia E Dyck, Erin Gee and Vivian Li.

Graphic design by Sultana Bambino.

In November 2022 Audio Placebo Plaza traveled to Karachi, Pakistan, as part of the Karachi Biennale.

Audio Placebo Plaza (APP) is a female and gender non-conforming collective of artists (Julia E. Dyck, Erin Gee, Vivian Li) focused on the expansion of intersectional feminist methods of care, emotional labor, collaboration, and community in sound art. APP considers “placebo” as a complex, open-ended, and optimistic conceptual framework for work that embraces irony, play, and co-performativity in psychosomatic sound art. Through performance and interactivity, APP engages with community members to discuss these topics, creating original audio artworks based on conversations in our healing space. We offer everyday people customized positive messages, audio creations, healing frequencies, binaural beats, and ASMR, responding to the needs of our community through a practice of radical sonic care. We promise our fullest intention.

https://audioplaceboplaza.com/

You can listen to the albums from the Montreal edition and the Karachi edition here:

https://audioplaceboplaza.bandcamp.com/

Audio Placebo Plaza – Pillow Talk Radio

https://www.instagram.com/p/CucUFrboaoU/?img_index=1

Press

https://www.thenews.com.pk/magazine/you/1014017-visionary-women

https://mmnews.tv/here-are-artworks-you-must-visit-at-karachi-biennale-2022/

https://www.thenews.com.pk/magazine/us/1008137-karachi-biennale-2022-where-art-meets-technology
