In Bloom

In Bloom installation version (2024)

2023 / 2024

Bloom as an expression of fulfillment: a miracle of becoming, fullness.

In nature, the body is not just the self; it can also be understood as food, or fuel, for a larger motor of the living in an ecological relation. How can we know what it means to be posthuman, or posthumanist, on this ontological level? This song is a conversation between bacteria, mold, fungus, and a recently dead human. These voices guide the listener through the after-death as their body decomposes and intimately enters the cycle of life.

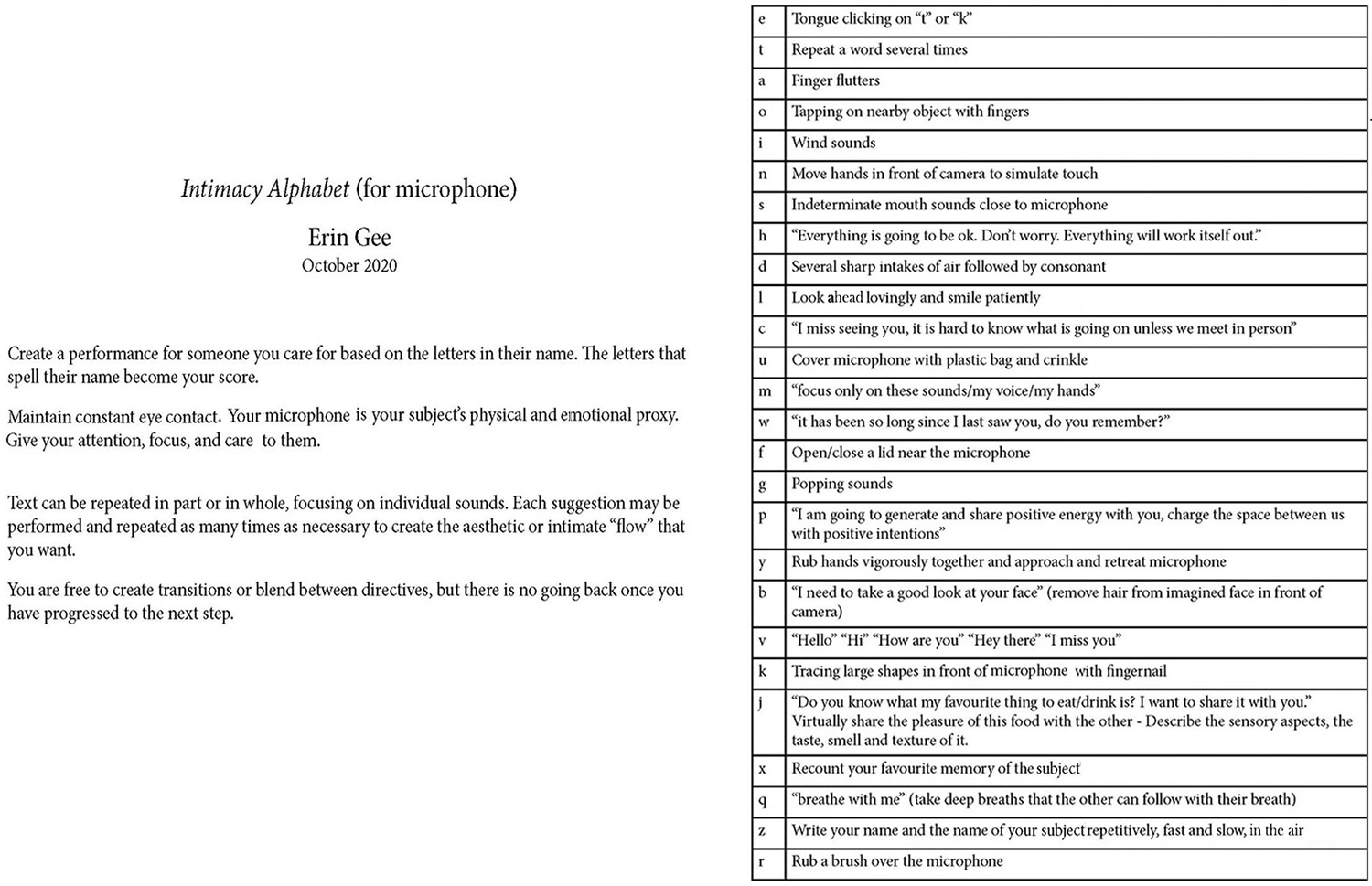

Performance Score for vocalist + instruments + tape

In Bloom was initially commissioned by Productions Totem Contemporain for live spoken voice and the bol, an instrument that could be considered a kind of percussion instrument combining a membrane and compressed air. The work was premiered by performer Francis Leduc.

The score for percussion and voice was later adapted for wind ensemble for the new music chamber collective Alkali, featuring Megan Johnson as vocalist.

Exhibition / Performance History

Commissioned by Totem Electrique for bol, objects, and voice. Performed by Francis Leduc in Montreal, QC, November 2023

Performance for bol, objects, and voice (Francis Leduc) in 16 channels as part of the 50th anniversary of the Sporobole artist-run centre in Sherbrooke, QC

Performed by Alkali Collective (bass clarinet, flute, saxophone, trombone, cello, percussion) + Megan Johnson (voice) in Hamilton, NB, 2024

Installation version for stereo audio recording and listening environment developed for Kamias Triennial (Toronto) 2024

Presented in Dolby surround as part of Project Immersed – Centre Phi, Montreal 2024.

Video

In Bloom Performance

VOCAL SOLOIST

Your voice is a portal to materialisms beyond our human senses, guiding the listener through the simultaneous care and brutal indifference of nature. You are a pink-collar worker of death, and you take great pride in your work. The vocalist can take some liberties with the text by repeating consonants or words for embellishment, inserting mouth sounds or unintelligible whispering, or switching between whispered and soft-spoken vocal styles. The rhythms of the text should more or less correspond to those in the tape part; for this reason, I recommend memorizing the piece by rehearsing it on headphones while taking long walks in nature.

The performer's hands should gesture in imitation of online ASMR performers who trace shapes, manipulate objects, make finger flutters, touch an imaginary face in front of them, or touch their own face. You shouldn't gesture constantly: try assigning different gestures to different sections of the music to keep focus, and remember that sometimes stillness speaks for itself. Tracing shapes of different sizes and at different speeds, moving forward and backward, in spirals, or from side to side are all interesting options when performed with intention. Sections referencing the brushing of a face might benefit from a real brush as a prop, or you can simply brush with your fingers. These gestures reinforce the idea that real or microscopic hands are breaking down the listener's sensory organs, offering abstract simulations of impossible touch.

INSTRUMENTALISTS

You are a host of forest creatures, mushrooms, bacteria, slime mold, and life itself. You are not directly engaged with the listener but are more like a choir that comments on the action, listening and responding to the vocalist. Sometimes your presence is an imminent threat, but often it is a sensitive, delicate one. The sounds you make can range from swelling chords to subtle, delicate bursts of noise to loud, harsh interjections. Close-miking the instruments to pick up subtle sounds such as key clicks, air in the instrument, singing into the instrument, or even tapping or touching the instrument offers more creative options that are in tune with the sonic inspiration of the work. Be careful not to sonically overwhelm the soloist when they are speaking.

Score for wind ensemble and voice

Please contact the artist to access the score for percussion instruments and voice.

Audio installation

The work was adapted into an intimate listening installation with headphones, a custom-printed bedsheet, a linen wrap, and earth, as part of the Kamias Triennial 2024 at Gallery TPW, Toronto, curated by Patrick Cruz, Su-Ying Lee, and Karie Liao.

The work has also been adapted into Dolby surround sound for Centre Phi’s Habitat Sonore space as part of the programming of Project Immersed 2024.

In Bloom Audio preview