Swarming Emotional Pianos

Swarming Emotional Pianos (2012 – ongoing)

Aluminium tubes, servo motors, custom mallets, Arduino-based electronics, iCreate platforms

Approximately 27” x 12” x 12” each

2012

A looming projection of a human performer surrounded by six musical chime robots: their music is driven by the shifting rhythms of the performer's emotional body, transformed into data and signals that activate the motors of the ensemble.

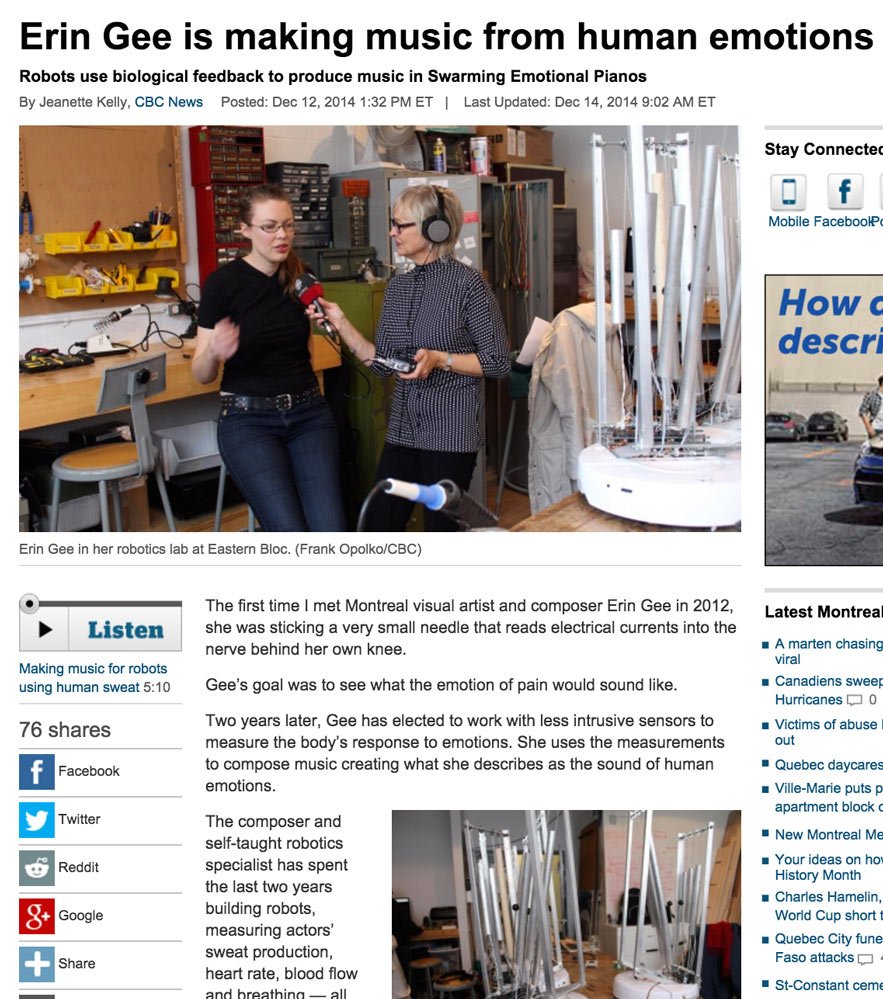

Swarming Emotional Pianos is a robotic installation that features performance documentation of an actress moving through extreme emotions in five-minute intervals. During these timed performances of extreme surprise, anger, fear, sadness, sexual arousal, and joy, Gee used her own custom-built biosensors to capture how each emotion affects the actress's heartbeat, sweat, and respiration. The data from this session drives the musical outbursts of the robots surrounding the video documentation of the emotional session. Visitors to this work are presented with two windows into the actress's emotional state: a large projection of her face, paired with a stereo recording of her breath and the sounds of the emotional session; and the normally inaccessible emotional world of physiology, the physicality of sensation as represented by the six robotic chimes.

Micro-bursts of emotional sentiment are amplified by the robots, providing an intimate and abstract soundtrack for this "emotional movie". These mechanistic, physiological effects of emotion drive the robotics, illustrating the physicality and automation of human emotion. By displaying both of these perspectives on human emotion simultaneously, I am interested in how the rhythmic pulsing of the robotic bodies confirms or denies the visibility and performativity of the face. Does emotion lie within the visibility of facial expression, or in the patterns of sensation within her body? Is the actress sincere in her performance if the emotion is felt rather than displayed?

Gee has been developing custom open-source biosensors since 2012. They measure heart rate and signal amplitude, respiration amplitude and rate, and galvanic skin response (sweat). The designs are available on her GitHub page for anyone who would like to try the technology or contribute to the research.

Credits

Thank you to the following for your contributions:

In loving memory of Martin Peach (my robot teacher) – Sébastien Roy (lighting circuitry) – Peter van Haaften (tools for algorithmic composition in Max/MSP) – Grégory Perrin (Electronics Assistant)

Jason Leith, Vivian Li, Mark Lowe, Simone Pitot, Matt Risk, and Tristan Stevans for their dedicated help in the studio

Concordia University, the MARCS Institute at the University of Western Sydney, Innovations en Concert Montréal, Conseil des Arts de Montréal, Thought Technology, and AD Instruments for their support.

Videos

Swarming Emotional Pianos (2012-2014)

Machine demonstration March 2014 – Eastern Bloc Lab Residency, Montréal